Question 1

You have a Fabric tenant that contains JSON files in OneLake. The files have one billion items.

You plan to perform time series analysis of the items.

You need to transform the data, visualize the data to find insights, perform anomaly detection, and share the insights with other business users. The solution must meet the following requirements:

- Use parallel processing.

- Minimize the duplication of data.

- Minimize how long it takes to load the data.

What should you use to transform and visualize the data?

- A. the PySpark library in a Fabric notebook

- B. the pandas library in a Fabric notebook

- C. a Microsoft Power BI report that uses core visuals

Answer:

a


Question 2

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have a Fabric tenant that contains a lakehouse named Lakehouse1. Lakehouse1 contains a Delta table named Customer.

When you query Customer, you discover that the query is slow to execute. You suspect that maintenance was NOT performed on the table.

You need to identify whether maintenance tasks were performed on Customer.

Solution: You run the following Spark SQL statement:

DESCRIBE HISTORY customer

Does this meet the goal?

- A. Yes

- B. No

Answer:

a
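
As a rough illustration of how the history output could be checked for maintenance, the sketch below filters history rows by operation name. Delta Lake records `OPTIMIZE`, `VACUUM START`, and `VACUUM END` as operations in the table history. The helper name `maintenance_entries` and the sample rows are hypothetical and only mimic the shape of `DESCRIBE HISTORY` output.

```python
# Operation names that Delta Lake records for table maintenance commands.
MAINTENANCE_OPERATIONS = {"OPTIMIZE", "VACUUM START", "VACUUM END"}


def maintenance_entries(history_rows):
    """Return the history rows whose operation is a maintenance command."""
    return [row for row in history_rows if row["operation"] in MAINTENANCE_OPERATIONS]


# Hypothetical sample shaped like DESCRIBE HISTORY output (illustration only).
sample_history = [
    {"version": 0, "timestamp": "2024-01-01 08:00:00", "operation": "CREATE TABLE AS SELECT"},
    {"version": 1, "timestamp": "2024-01-02 08:00:00", "operation": "WRITE"},
    {"version": 2, "timestamp": "2024-01-03 08:00:00", "operation": "OPTIMIZE"},
]

found = maintenance_entries(sample_history)
print([row["version"] for row in found])
```

In a notebook, the input rows could plausibly be obtained with something like `[r.asDict() for r in spark.sql("DESCRIBE HISTORY customer").collect()]`; an empty result would suggest that no maintenance was recorded for the table.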


Question 3

Case study

This is a case study. Case studies are not timed separately. You can use as much exam time as you would like to complete each case. However, there may be additional case studies and sections on this exam. You must manage your time to ensure that you are able to complete all questions included on this exam in the time provided.

To answer the questions included in a case study, you will need to reference information that is provided in the case study. Case studies might contain exhibits and other resources that provide more information about the scenario that is described in the case study. Each question is independent of the other questions in this case study.

At the end of this case study, a review screen will appear. This screen allows you to review your answers and to make changes before you move to the next section of the exam. After you begin a new section, you cannot return to this section.

To start the case study

To display the first question in this case study, click the Next button. Use the buttons in the left pane to explore the content of the case study before you answer the questions. Clicking these buttons displays information such as business requirements, existing environment, and problem statements. If the case study has an All Information tab, note that the information displayed is identical to the information displayed on the subsequent tabs. When you are ready to answer a question, click the Question button to return to the question.

Overview

Contoso, Ltd. is a US-based health supplements company. Contoso has two divisions named Sales and Research. The Sales division contains two departments named Online Sales and Retail Sales. The Research division assigns internally developed product lines to individual teams of researchers and analysts.

Existing Environment

Identity Environment

Contoso has a Microsoft Entra tenant named contoso.com. The tenant contains two groups named ResearchReviewersGroup1 and ResearchReviewersGroup2.

Data Environment

Contoso has the following data environment:

- The Sales division uses a Microsoft Power BI Premium capacity.

- The semantic model of the Online Sales department includes a fact table named Orders that uses Import mode. In the system of origin, the OrderID value represents the sequence in which orders are created.

- The Research department uses an on-premises, third-party data warehousing product.

- Fabric is enabled for contoso.com.

- An Azure Data Lake Storage Gen2 storage account named storage1 contains Research division data for a product line named Productline1. The data is in the delta format.

- A Data Lake Storage Gen2 storage account named storage2 contains Research division data for a product line named Productline2. The data is in the CSV format.

Requirements

Planned Changes

Contoso plans to make the following changes:

- Enable support for Fabric in the Power BI Premium capacity used by the Sales division.

- Make all the data for the Sales division and the Research division available in Fabric.

- For the Research division, create two Fabric workspaces named Productline1ws and Productline2ws.

- In Productline1ws, create a lakehouse named Lakehouse1.

- In Lakehouse1, create a shortcut to storage1 named ResearchProduct.

Data Analytics Requirements

Contoso identifies the following data analytics requirements:

- All the workspaces for the Sales division and the Research division must support all Fabric experiences.

- The Research division workspaces must use a dedicated, on-demand capacity that has per-minute billing.

- The Research division workspaces must be grouped together logically to support OneLake data hub filtering based on the department name.

- For the Research division workspaces, the members of ResearchReviewersGroup1 must be able to read lakehouse and warehouse data and shortcuts by using SQL endpoints.

- For the Research division workspaces, the members of ResearchReviewersGroup2 must be able to read lakehouse data by using Lakehouse explorer.

- All the semantic models and reports for the Research division must use version control that supports branching.

Data Preparation Requirements

Contoso identifies the following data preparation requirements:

- The Research division data for Productline1 must be retrieved from Lakehouse1 by using Fabric notebooks.

- All the Research division data in the lakehouses must be presented as managed tables in Lakehouse explorer.

Semantic Model Requirements

Contoso identifies the following requirements for implementing and managing semantic models:

- The number of rows added to the Orders table during refreshes must be minimized.

- The semantic models in the Research division workspaces must use Direct Lake mode.

General Requirements

Contoso identifies the following high-level requirements that must be considered for all solutions:

- Follow the principle of least privilege when applicable.

- Minimize implementation and maintenance effort when possible.

You need to ensure that Contoso can use version control to meet the data analytics requirements and the general requirements.

What should you do?

- A. Store all the semantic models and reports in Data Lake Gen2 storage.

- B. Modify the settings of the Research workspaces to use a GitHub repository.

- C. Modify the settings of the Research division workspaces to use an Azure Repos repository.

- D. Store all the semantic models and reports in Microsoft OneDrive.

Answer:

b


Question 4

HOTSPOT You have a Fabric tenant that contains a lakehouse.

You are using a Fabric notebook to save a large DataFrame by using the following code.

df.write.partitionBy("year", "month", "day").mode("overwrite").parquet("Files/SalesOrder")
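
To make the effect of `partitionBy` on the folder layout concrete, here is a minimal sketch in plain Python of the Hive-style directory naming that Spark uses for partitioned output (one `column=value` folder level per partition column). The helper `partition_path` and the sample row are hypothetical and only illustrate the naming scheme; they are not part of the Spark API.

```python
from pathlib import PurePosixPath


def partition_path(base, partition_columns, row):
    """Build the Hive-style directory for one row: base/col1=value1/col2=value2/..."""
    path = PurePosixPath(base)
    for column in partition_columns:
        path = path / f"{column}={row[column]}"
    return str(path)


# Hypothetical row; only the partition columns affect the directory name.
row = {"year": 2024, "month": 3, "day": 15, "amount": 19.99}
print(partition_path("Files/SalesOrder", ["year", "month", "day"], row))
# prints Files/SalesOrder/year=2024/month=3/day=15
```

Because the partition values are encoded in the folder names, a reader that filters on year, month, or day can skip folders that do not match.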

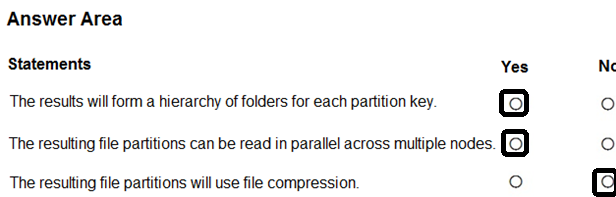

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Answer:


Question 5

HOTSPOT

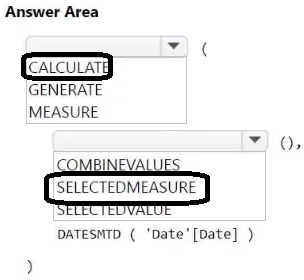

You have a Microsoft Power BI semantic model.

You plan to implement calculation groups.

You need to create a calculation item that will change the context from the selected date to month-to-date (MTD).

How should you complete the DAX expression? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Answer:


Question 6

You have a Fabric workspace named Workspace1 that contains a dataflow named Dataflow1. Dataflow1 returns 500 rows of data.

You need to identify the min and max values for each column in the query results.

Which three Data view options should you select? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

- A. Show column value distribution

- B. Enable column profile

- C. Show column profile in details pane

- D. Show column quality details

- E. Enable details pane

Answer:

abc
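
For reference, the column statistics in a column profile include the minimum and maximum of each column. The sketch below shows the equivalent calculation in plain Python over a handful of rows; the function `column_min_max` and the sample data are hypothetical and stand in for the 500 rows that Dataflow1 returns.

```python
def column_min_max(rows):
    """Return {column: (min, max)} for a list of row dictionaries, skipping None values."""
    stats = {}
    for row in rows:
        for column, value in row.items():
            if value is None:
                continue  # nulls do not contribute to min or max
            if column not in stats:
                stats[column] = (value, value)
            else:
                low, high = stats[column]
                stats[column] = (min(low, value), max(high, value))
    return stats


# Hypothetical sample rows (illustration only).
sample_rows = [
    {"OrderID": 3, "Amount": 12.5},
    {"OrderID": 1, "Amount": None},
    {"OrderID": 2, "Amount": 40.0},
]
print(column_min_max(sample_rows))
# prints {'OrderID': (1, 3), 'Amount': (12.5, 40.0)}
```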


Question 7

HOTSPOT

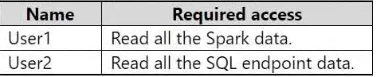

You have a Fabric tenant.

You need to configure OneLake security for users shown in the following table.

The solution must follow the principle of least privilege.

Which permission should you assign to each user? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Answer:


Question 8

You have a Microsoft Fabric tenant that contains a dataflow.

You are exploring a new semantic model.

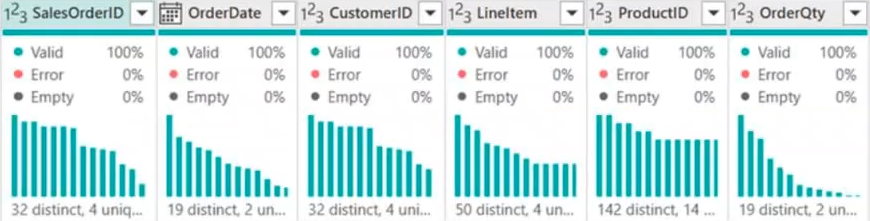

From Power Query, you need to view column information as shown in the following exhibit.

Which three Data view options should you select? Each correct answer presents part of the solution.

- A. Show column value distribution

- B. Enable details pane

- C. Enable column profile

- D. Show column quality details

- E. Show column profile in details pane

Answer:

bcd


Question 9

You have a Fabric notebook that has the Python code and output shown in the following exhibit.

Which type of analytics are you performing?

- A. descriptive

- B. diagnostic

- C. prescriptive

- D. predictive

Answer:

a


Question 10

You have a Fabric tenant that contains 30 CSV files in OneLake. The files are updated daily.

You create a Microsoft Power BI semantic model named Model1 that uses the CSV files as a data source. You configure incremental refresh for Model1 and publish the model to a Premium capacity in the Fabric tenant.

When you initiate a refresh of Model1, the refresh fails after running out of resources.

What is a possible cause of the failure?

- A. Query folding is occurring.

- B. Only refresh complete days is selected.

- C. XMLA Endpoint is set to Read Only.

- D. Query folding is NOT occurring.

- E. The data type of the column used to partition the data has changed.

Answer:

d
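
The reasoning can be sketched as a small simulation. Incremental refresh filters each partition by the `RangeStart` and `RangeEnd` parameters; when that filter cannot be folded into a query against the source, as is typically the case for flat files such as CSV, every row is read before the filter is applied. The function name and sample data below are hypothetical and model only the row counts, not the actual refresh engine.

```python
from datetime import date


def refresh_partition_without_folding(all_rows, range_start, range_end):
    """Simulate a partition refresh when the date filter cannot be pushed to the source.

    Every row is read first; the RangeStart/RangeEnd filter is applied afterwards.
    Returns (rows_kept, rows_scanned).
    """
    scanned = 0
    kept = []
    for row in all_rows:
        scanned += 1  # the row is loaded regardless of its date
        if range_start <= row["order_date"] < range_end:
            kept.append(row)
    return kept, scanned


# Hypothetical data: one row per day for 30 days.
rows = [{"order_date": date(2024, 1, d)} for d in range(1, 31)]
kept, scanned = refresh_partition_without_folding(rows, date(2024, 1, 10), date(2024, 1, 11))
print(len(kept), scanned)
# prints 1 30
```

Only one row belongs to the refreshed partition, yet all 30 are scanned. Repeated for every partition against large files, this pattern can exhaust the resources available to the refresh.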

